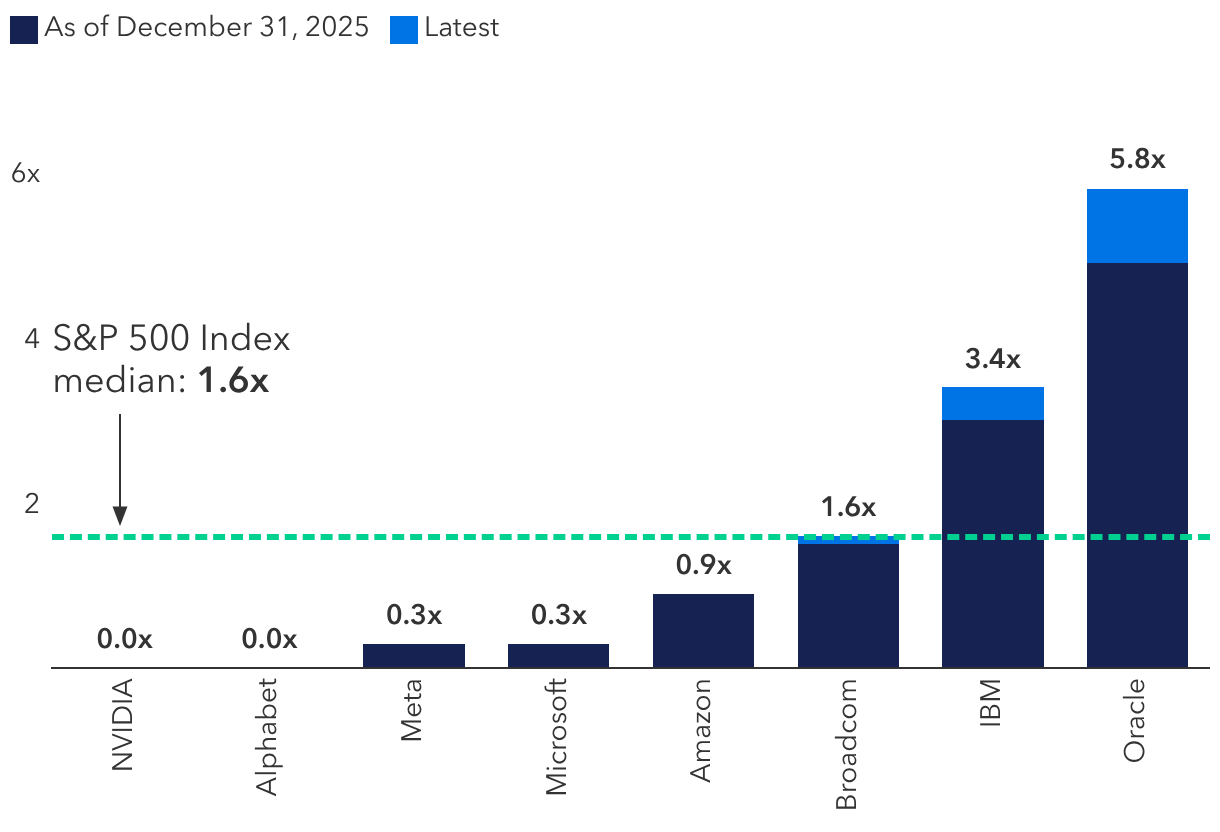

Most of these companies currently have low leverage and are issuing debt to fund AI-related capital expenditure (capex), which helps optimise their capital structure. “Given their sizable cash reserves and healthy cash flows, they can likely fund these projects on their own, even when considering the increased capital expenditure,” McCann explains. “In my view, this reduces the systemic risks considerably.”

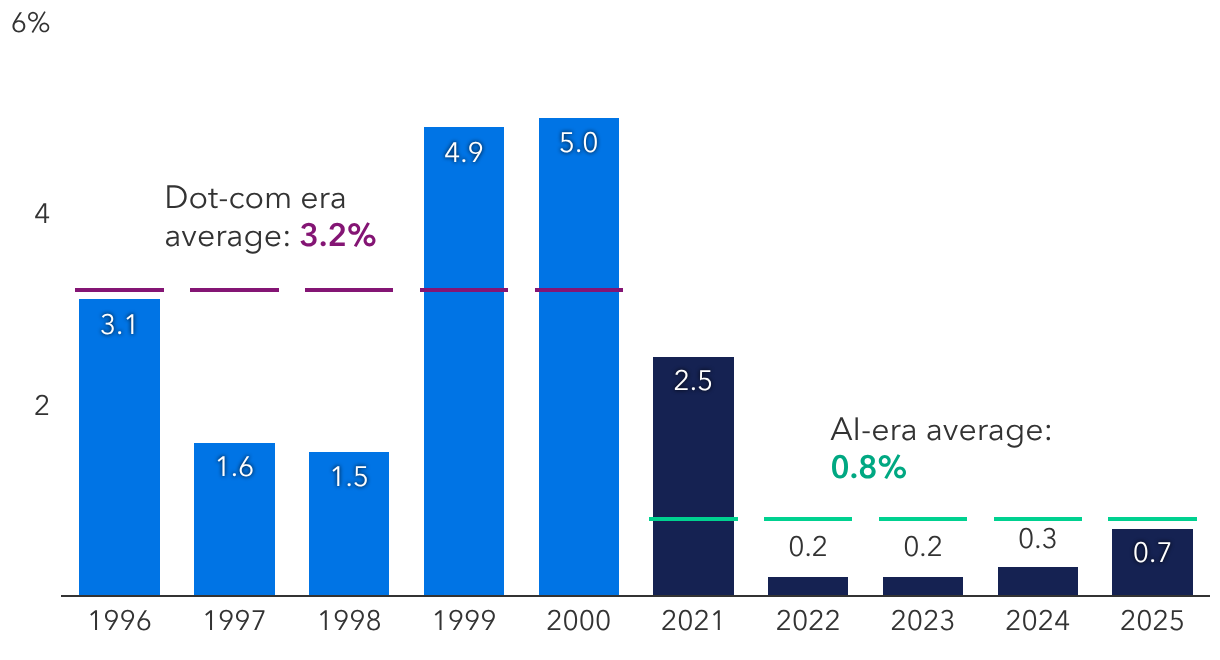

McCann argues this is a sharp contrast to companies in the dot-com era. “Many tech companies in the late 1990s were operating with limited or even negative cash flow, relying heavily on equity issuances and more speculative venture capital,” he says. “Companies like WorldCom piled on debt and increased leverage considerably for their fibre network build-out while Pets.com raised large sums despite unproven demand.”

Importantly, several of today’s investment-grade debt deals have been issued at the parent company level, which offers many advantages, McCann says. Chief among them is that their value is tied to the collective cash flows and worth of the company. For example, Alphabet is the parent company of Google, YouTube, Waymo, DeepMind and other subsidiaries. “It’s an important distinction because you’re not lending to a structure that only exists to fund investments in AI.”

Still, investors have demanded additional yield to own AI-related bonds compared to similarly rated peers, a premium reflecting the large quantum of bonds issued, in addition to modestly higher issuer leverage and uncertainty about whether demand for AI will continue at the current pace.

3. Creative financing

Another concern is so-called vendor financing. Money is looping between the same companies, with start-ups and hyperscalers buying from one another and helping each other grow revenue. Case in point: Amazon and Google each invested billions in Anthropic, an AI systems start-up. In return, Anthropic agreed to use Amazon Web Services and Google’s services and products.

The 1990s saw similar circular arrangements, with Lucent Technologies lending excessively to cash-strapped start-ups so they could buy Lucent’s equipment. Those customers were eventually unable to pay, forcing Lucent to restate revenue and take huge write-offs.

McCann believes a near-term house-of-cards scenario is unlikely for hyperscalers. “Unlike Lucent, they’re lending only a small fraction of their cash flows,” he says. “Their financial strength generally gives them flexibility to pursue alternative ways of funding their expansion plans, which may include off-balance sheet arrangements or project-finance deals.”

Meta, for instance, has a joint venture with Blue Owl Capital called Beignet Investor to build a supersized data centre in Louisiana dubbed Hyperion. Microsoft, meanwhile, has signed short-term deals with data centre providers called neoclouds that are viewed as operating expenses rather than long-term capital investments.

Because the AI build-out is still considered early, these non-traditional deals will likely increase in the coming year, particularly with private credit. “They may be attractive in certain instances but do require additional scrutiny from potential lenders because they are structured to limit the financial risks to the parent company,” McCann explains.

“While I believe in the transformative power of this technology, I’m not in any rush to invest in these deals. It’s going to come down to the individual structure and what the contracts look like, including an evaluation of the financial support provided by the hyperscalers.”

4. Overbuilding

If you build it, the thinking goes, growth will follow. In the early 2000s, telecom companies poured billions into fibre optic cable networks, believing demand for transmitting internet data was unlimited. What happened instead was an oversupply that led to massive asset write-downs and investor losses.

According to US economist Jared Franz, “It is important to remember that overinvesting is a feature, not a bug, of any major advance in technology.” At some point the companies will shift their focus to investing more efficiently.

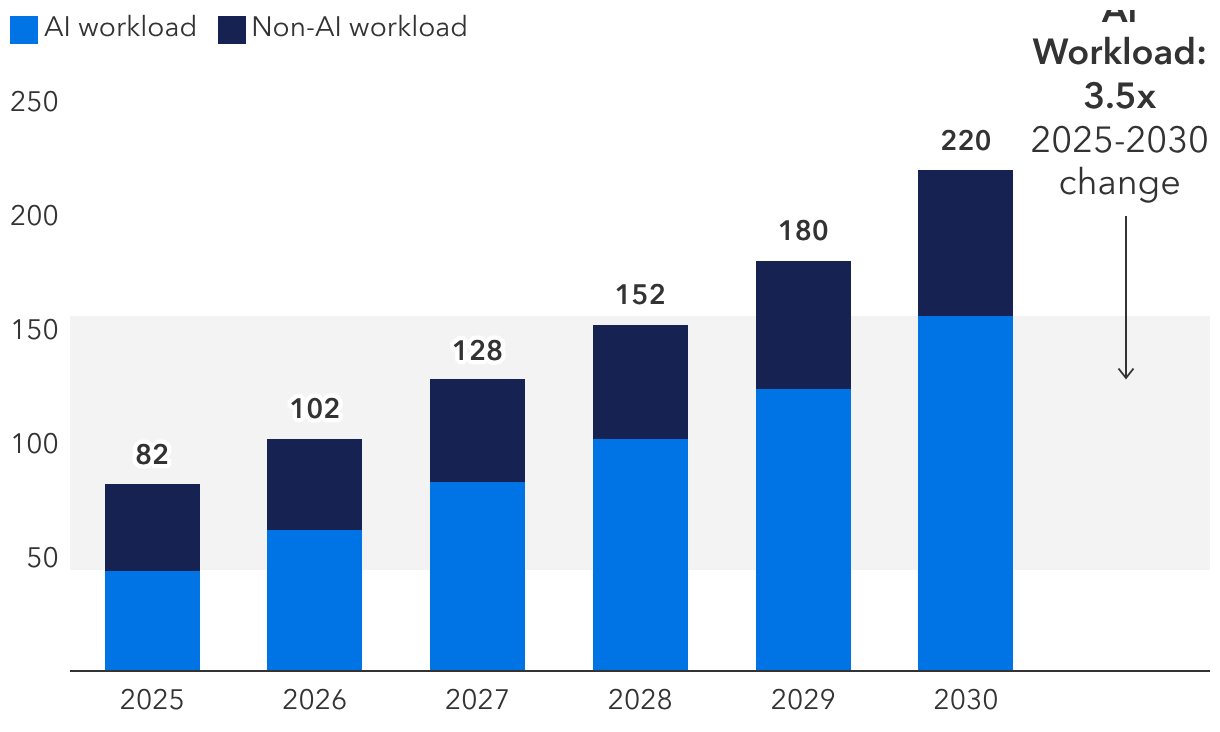

Today, hyperscalers believe building more data centres is essential to expanding AI inference, or the ability to run generative AI models for everyday use. As Franz puts it, the infrastructure is required to take on more AI workloads tied to training and inference, with the latter requiring reliable, always-on computing to serve users in real time. “From an AI workloads perspective, the physical infrastructure needs to get built first before it can be populated with graphics processing units (GPUs), networking and storage to run the next generation of large language models.”

Thus, investors pay close attention to the performance of new AI models and their updates, he adds. If gains begin to plateau, that could signal AI demand may not keep pace with spending. Nevertheless, Franz believes that the capacity hyperscalers are building over the next two years could be repurposed for other businesses if the scaling laws do not hold. “For some companies, there will be demand for that compute even if AI demand slows.”

5. Resource constraints

The availability of electricity has emerged as an urgent issue for AI’s growth potential, according to fixed income analyst Julian James. That is because data centres require memory, power, chips, copper and water. Obstacles in these areas could hamper infrastructure development, slowing hyperscalers’ capex and pressuring development timelines.

“When I met with utility sector CEOs in late 2025, many said that the availability of electricity and the extended timeline for data centres to be connected to the electric grid are the biggest constraints for expansion, which are required for the growth of AI,” he says. “The key bottleneck is a shortage of skilled workers capable of building new power plants and the transmission lines needed to connect new data centres to the grid.”